Redundant Array of Inexpensive Disks (RAID) combines a set of disks to improve performance, provide data redundancy, or both. RAID can help with slow read and write performance. RAID is defined in several levels. This article covers RAID Level 10 and how to implement it on a Linux system.

RAID 10 Overview

RAID 10 is sometimes referred to as a Stripe of Mirrors or RAID 1 + 0. The disks are divided into groups of two. The two disks in each group are mirrored, and data is striped across the groups. The redundancy comes from the mirroring of the drives. When disks are added, they should be added in pairs so that every group has two disks and no group is without redundancy.

Hardware

For RAID 10, a minimum of four disks is required. The redundancy allows one drive per group to fail while the data remains accessible. The downside of RAID 10 is that only half of the combined space of all disks in the Array is available for data. Mirroring reduces the available space by half, but provides excellent redundancy.
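The capacity rule above can be checked with a little shell arithmetic. This is only a sketch: the function name 'raid10_usable_kib' is made up for illustration, and it assumes two copies of every block (the 'n2' layout that mdadm reports later in this article) and that every member contributes the same size.

```shell
# Usable size of a RAID 10 array with two copies of every block.
# Arguments: number of members, usable size of each member in KiB.
raid10_usable_kib() {
  local count=$1 per_device_kib=$2
  echo $(( count * per_device_kib / 2 ))
}

# Four members of 3907072K each, the size mdadm picks later in this article.
raid10_usable_kib 4 3907072   # prints 7814144
```

The result matches the '7814144 blocks' that /proc/mdstat reports for the finished Array.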

To create the RAID Array, I will use four USB drives called BLUE, ORANGE, GREEN and LEXAR. Three of the drives are SanDisk Cruzer Switches which are USB 2.0 compliant and have a capacity of 4 GB (3.7 GB). The LEXAR is a PNY Attache and is USB 2.0 compliant, but is 8 GB (7.47 GB) in size.

NOTE: When dealing with RAID arrays, all disks should be the same size. If they are not, they must be partitioned to be the same size. The smallest drive in the array sets the usable size of all of the disks.

I placed all four USB sticks in the same hub and tested the read and write speed. A 100 MB file was written to and read from each stick and timed. Writing took an average of 18.75 seconds, making the average write speed 1.33 MB/sec. Reading took an average of 4.5 seconds, making the average read speed 5.56 MB/sec.
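The article does not say which tool was used for the timing. One possible way to time a write is sketched below with 'dd'. The function name 'time_write' and the file name 'speedtest.bin' are made up for illustration, and the sketch assumes GNU 'dd' and GNU 'date' (for the '%N' format).

```shell
# Write size_mb megabytes of zeros into a directory and print the elapsed seconds.
# conv=fsync makes dd flush the data to the device before it exits,
# so the time is not just the time needed to fill the page cache.
time_write() {
  local dir=$1 size_mb=$2 start end
  start=$(date +%s.%N)
  dd if=/dev/zero of="$dir/speedtest.bin" bs=1M count="$size_mb" conv=fsync status=none
  end=$(date +%s.%N)
  awk -v s="$start" -v e="$end" 'BEGIN { printf "%.2f\n", e - s }'
}

# Example run against a temporary directory; point it at the mount point
# of a USB stick to test that stick instead.
scratch=$(mktemp -d)
time_write "$scratch" 1
rm -rf "$scratch"
```

To repeat the test from this article, the call would be something like 'time_write /media/jarret/BLUE 100', where the path is wherever the stick happens to be mounted.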

To set up the RAID Array you use the command 'mdadm'. If 'mdadm' is not installed on your system you will receive an error in a terminal when you enter the command.

To install it, use Synaptic, or the like, for your Linux distro.
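From a terminal, a quick check like the following shows whether the command is available. The helper name 'have_cmd' is made up for illustration, and the install hint assumes a Debian-style system with 'apt-get'; other distros use their own package manager.

```shell
# Return success if the named command can be found on the PATH.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

if have_cmd mdadm; then
  echo "mdadm is installed"
else
  echo "mdadm is missing; on Debian or Ubuntu try: sudo apt-get install mdadm"
fi
```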

Once installed you are ready to make a RAID 10 Array.

Creating the RAID Array

Open a terminal and type 'lsblk' to get a list of your available drives. Make a note of the drives you are using so you do not type in the wrong drive and add it to the Array.

NOTE: Entering the wrong drive can cause a loss of data.

From the listing produced by the command above I am using sdc1, sdd1, sde1 and sdf1. The command is as follows:

sudo mdadm --create /dev/md0 --level=10 --raid-devices=4 /dev/sdc1 /dev/sdd1 /dev/sde1 /dev/sdf1 --verbose

The command creates (--create) a RAID Array called md0. The RAID Level is 10 and four devices are being used to create the RAID Array – sdc1, sdd1, sde1 and sdf1.

The following should occur:

mdadm: layout defaults to n2

mdadm: layout defaults to n2

mdadm: chunk size defaults to 512K

mdadm: /dev/sdc1 appears to be part of a raid array: level=raid0 devices=0 ctime=Wed Dec 31 19:00:00 1969

mdadm: partition table exists on /dev/sdc1 but will be lost or meaningless after creating array

mdadm: /dev/sdd1 appears to be part of a raid array: level=raid0 devices=0 ctime=Wed Dec 31 19:00:00 1969

mdadm: partition table exists on /dev/sdd1 but will be lost or meaningless after creating array

mdadm: /dev/sde1 appears to be part of a raid array: level=raid0 devices=0 ctime=Wed Dec 31 19:00:00 1969

mdadm: partition table exists on /dev/sde1 but will be lost or meaningless after creating array

mdadm: /dev/sdf1 appears to be part of a raid array: level=raid0 devices=0 ctime=Wed Dec 31 19:00:00 1969

mdadm: partition table exists on /dev/sdf1 but will be lost or meaningless after creating array

mdadm: size set to 3907072K

mdadm: largest drive (/dev/sdc1) exceeds size (3907072K) by more than 1%

Continue creating array?

NOTE: If you get an error that the device is busy then remove 'dmraid'. In a Debian system use the command 'sudo apt-get remove dmraid' and when completed, reboot the system. After the system restarts try the 'mdadm' command again. You also have to use 'umount' to unmount any of the drives that are mounted.

Answer 'y' to the question 'Continue creating array?' and the following should appear:

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started.

The RAID Array is created and running, but not yet ready for use.

Prepare md0 for use

You may look around, but the drive md0 is not to be found. Open the GParted application and you will see it there ready to be prepared for use.

By selecting /dev/md0 you will get an error that no Partition Table exists on the RAID Array. Select Device from the top menu and then 'Create Partition Table…'. Specify your partition type and click APPLY.

Now, create the Partition and select your file format to be used. It is suggested to use either EXT3 or EXT4 for formatting the Array. You may also want to select the RAID Flag. Add the Partition scheme. I gave a Label of “RAID10” and then clicked APPLY to make all the selected changes. The drives should be formatted as selected and the RAID Array is ready to be mounted for use.

Mount RAID Array

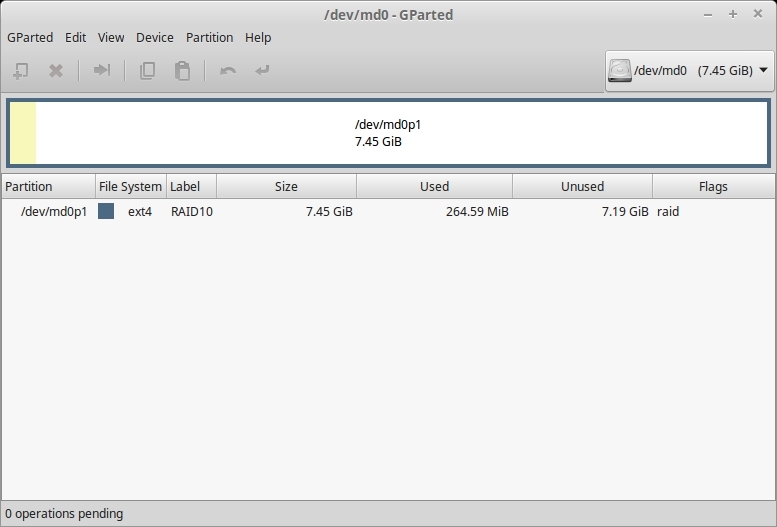

Before closing GParted look at the Partition name as shown in Figure 01. My Partition name is '/dev/md0p1'. The partition name is important for mounting.

FIGURE 01

You may be able to simply mount 'RAID10' as I was able to do.

If the mount does not work, then try the following: go to your '/media' folder and as ROOT create a folder, such as RAID, to be used as a mount point. In a terminal use the command 'sudo mount /dev/md0p1 /media/RAID' to mount the RAID Array as the media device named RAID.

Now you must take ownership of the RAID Array with the command:

sudo chown -R jarret:jarret /media/RAID

The command uses my username (jarret) and group name (jarret) to take ownership of the mounted RAID Array. Use your own username and mount point.

Now, when I write to the RAID Array my time to write a 100 MB file is an average of 11.33 seconds. The speed to write is now 8.83 MB/sec. Reading a 100 MB file from the RAID Array takes an average of 4 seconds which makes a speed of 25 MB/sec.

As you can see the speed has dramatically changed (write: 1.33 MB/s to 8.83 MB/s and read: 5.56 MB/s to 25 MB/s).
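The speeds for the Array are simply the file size divided by the elapsed time. A small sketch of that arithmetic follows; the function name 'mb_per_sec' is made up for illustration.

```shell
# Throughput in MB/sec from a file size in MB and an elapsed time in seconds.
mb_per_sec() {
  awk -v mb="$1" -v sec="$2" 'BEGIN { printf "%.2f\n", mb / sec }'
}

mb_per_sec 100 11.33   # write to the Array, prints 8.83
mb_per_sec 100 4       # read from the Array, prints 25.00
```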

NOTE: The speed may be increased by placing each drive on a separate USB ROOT HUB. To see the number of ROOT HUBs you have and where each device is located use the command 'lsusb'.

Auto Mount the RAID Array

Having the RAID Array mount automatically after each reboot is a simple task. Run the command 'blkid' to get the needed information about the RAID Array. For example, running it after I mounted my RAID Array gives the following:

/dev/sda2: UUID="73d91c92-9a38-4bc6-a913-048971d2cedd" TYPE="ext4"

/dev/sda3: UUID="9a621be5-750b-4ccd-a5c7-c0f38e60fed6" TYPE="ext4"

/dev/sda4: UUID="78f175aa-e777-4d22-b7b0-430272423c4c" TYPE="ext4"

/dev/sda5: UUID="d5991d2f-225a-4790-bbb9-b9a48e691061" TYPE="swap"

/dev/sdb1: LABEL="My Book" UUID="54D8D96AD8D94ABE" TYPE="ntfs"

/dev/sdd1: UUID="7446-789A" TYPE="vfat" LABEL="Green"

/dev/sde1: LABEL="ORANGE" UUID="B30C-4661" TYPE="vfat"

/dev/sdf1: LABEL="ORANGE" UUID="77FC-1664" TYPE="vfat"

/dev/sdc1: UUID="7889-96F6" TYPE="vfat" LABEL="LEXAR"

/dev/md0p1: LABEL="RAID10" UUID="149de165-38e1-40c5-9ee0-0bdb89277fd4" TYPE="ext4"

The needed information is the line with the partition '/dev/md0p1'. The Label is RAID10 and the UUID is '149de165-38e1-40c5-9ee0-0bdb89277fd4' and the type is EXT4.

Edit the file '/etc/fstab' as ROOT using an editor you prefer and add a line similar to 'UUID=149de165-38e1-40c5-9ee0-0bdb89277fd4 /media/RAID ext4 defaults 0 0'. The UUID comes from the output of the blkid command, with no space after 'UUID='. '/media/RAID' is the mount point and 'ext4' is the file system type. These are followed by the word 'defaults' and then '0 0'. Separate the fields with a TAB or spaces.
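To avoid typing the UUID by hand, the fstab line can be built from the blkid output. The following is a sketch only: the function name 'fstab_entry' is made up for illustration, and it assumes the blkid line has the same 'UUID="..."' and 'TYPE="..."' layout as the sample above.

```shell
# Build an fstab line from one line of blkid output and a mount point.
fstab_entry() {
  local line=$1 mount_point=$2 uuid fstype
  # Pull the quoted values out of the UUID="..." and TYPE="..." fields.
  uuid=$(printf '%s\n' "$line" | sed -n 's/.* UUID="\([^"]*\)".*/\1/p')
  fstype=$(printf '%s\n' "$line" | sed -n 's/.* TYPE="\([^"]*\)".*/\1/p')
  # Fields are separated by TABs.
  printf 'UUID=%s\t%s\t%s\tdefaults\t0\t0\n' "$uuid" "$mount_point" "$fstype"
}

# The md0p1 line from the blkid output shown above.
blkid_line='/dev/md0p1: LABEL="RAID10" UUID="149de165-38e1-40c5-9ee0-0bdb89277fd4" TYPE="ext4"'
fstab_entry "$blkid_line" /media/RAID
```

The printed line can be pasted into '/etc/fstab'. Running 'sudo mount -a' afterwards is a common way to check the new entry without rebooting.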

Your RAID 10 drive Array should now be fully operational.

Removing the RAID Array

To stop the RAID Array you need to unmount the RAID mount point then stop the device 'md0' as follows:

sudo umount -l /media/RAID

sudo mdadm --stop /dev/md0

Once done you need to reformat the drives and also remove the line from /etc/fstab which enabled it to be automounted.

Fixing a broken RAID Array

If one of the drives should fail you can easily replace the drive with a new one and restore the data to it.

Now, let's say that drive sde1 from the Array above fails. If I enter the 'lsblk' command the remaining drives sdc1, sdd1 and sdf1 are shown and still listed with 'md0p1'. The device RAID is still accessible and usable. The Fault Tolerance is unavailable for the group that contained sde1 since only one drive of that group remains.

To determine the faulty drive use the command 'cat /proc/mdstat' to get a result similar to:

md0 : active raid10 sdf1[3] sde1[2](F) sdd1[1] sdc1[0]

7814144 blocks super 1.2 512K chunks 2 near-copies [4/3] [UU_U]

unused devices: <none>

The (F) marks the drive which failed, in this case sde1, so you know which drive to remove and replace.
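If you want just the name of the failed drive, the (F) marker can be picked out with 'grep'. This is a sketch: the function name 'failed_members' is made up for illustration, and it assumes the member format 'name[number](F)' shown in the output above.

```shell
# Read mdstat text on standard input and print the members marked (F).
failed_members() {
  # Match entries such as sde1[2](F), then strip everything from the "[".
  grep -o '[[:alnum:]]*\[[0-9]*](F)' | sed 's/\[.*//'
}

# Try it on the sample line from this article.
printf '%s\n' 'md0 : active raid10 sdf1[3] sde1[2](F) sdd1[1] sdc1[0]' | failed_members
```

On a live system the input would come from the real file: 'failed_members < /proc/mdstat'.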

To fix a broken RAID Array replace the failed drive with a new drive that has at least as much space as the previous drive. After adding the new drive run 'lsblk' to find its device name. Say, for example, it is 'sdg1'. If the new drive was mounted automatically, unmount it by using its label with the command 'umount /media/jarret/label'.

To join the new drive to the existing broken RAID 10 Array, the command is:

sudo mdadm --manage /dev/md0 --add /dev/sdg1

The RAID Array device is 'md0' (the partition 'md0p1' shown previously in GParted sits on top of it). The device to add is 'sdg1'.

To see the progress of the rebuild use the command 'cat /proc/mdstat' to see something like:

md0 : active raid10 sdg1[4] sdf1[3] sde1[2](F) sdd1[1] sdc1[0]

7814144 blocks super 1.2 512K chunks 2 near-copies [4/3] [UU_U]

[>....................] recovery = 1.0% (40704/3907072) finish=9.4min speed=6784K/sec

unused devices: <none>

At any time the command 'cat /proc/mdstat' can be used to see the state of any existing RAID Array.
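For a script that waits for the rebuild to finish, the percentage can be pulled out of the recovery line. This is a sketch: the function name 'recovery_percent' is made up for illustration, and it assumes the 'recovery = N%' wording shown in the output above.

```shell
# Read mdstat text on standard input and print the recovery percentage, if any.
recovery_percent() {
  sed -n 's/.*recovery = *\([0-9.]*\)%.*/\1/p'
}

# Try it on the sample line from this article.
printf '%s\n' '[>....................] recovery = 1.0% (40704/3907072) finish=9.4min speed=6784K/sec' | recovery_percent
```

On a live system the call would be 'recovery_percent < /proc/mdstat'. It prints nothing when no rebuild is running.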

If you must remove a drive that is still working, first tell the system that the device has failed and then remove it from the Array. For instance, if I wanted to remove drive sde1 because it was making strange noises and I was afraid it would fail soon, the command would be:

sudo mdadm --manage /dev/md0 --fail /dev/sde1 --remove /dev/sde1

The command 'cat /proc/mdstat' should show the Array running in a degraded state without sde1. You need to tell the system to remove the device from the Array before you unplug it.

You can now remove the drive, add a new one and rebuild the Array as described above.

Hope this helps you understand the RAID 10 Arrays. Enjoy your RAID Array!
